8. Managing GPUs

CloudVeneto provides some GPUs (Graphics Processing Units). This section describes how to use the GPUs allocated to the following projects:

HPC-Physics

CONVECS

PhysicsOfData-students

Note

CloudVeneto also integrates other Nvidia GPUs, but these are reserved for specific projects (the ones that paid for these resources). The instructions in this section do not apply to those GPUs.

Using a GPU means accessing a virtual machine that has full, direct control of the GPU device.

GPU instances, i.e. virtual machines with access to one or more GPUs, can be created only in the HPC-Physics, CONVECS or PhysicsOfData-students projects. So, first of all, you need to request affiliation to one of these projects, if you are entitled to it (see Apply for other projects for the relevant instructions).

Important

You can ask to be affiliated to the HPC-Physics project if you are a Unipd Physics Dept. or INFN Padova user.

You can ask to be affiliated to the CONVECS project for INFN related scientific activities.

You can ask to be affiliated to the PhysicsOfData-students project if you are a student of the Unipd master’s degree in Physics of Data.

The GPUs available in these projects are:

8 GPUs Nvidia RTX PRO 6000 Blackwell Server Edition (usable in the CONVECS project)

4 GPUs Nvidia V100 (usable in the HPC-Physics project)

8 GPUs Nvidia Tesla T4, each one coupled with 15 CPU cores (usable in the HPC-Physics project)

4 GPUs Nvidia Tesla T4, each one coupled with 8 CPU cores (usable in the PhysicsOfData-students and HPC-Physics projects)

1 GPU Nvidia Quadro RTX 6000 (usable in the HPC-Physics project)

2 GPUs Nvidia TITAN Xp (usable in the HPC-Physics project)

1 GPU Nvidia GeForce GTX TITAN (usable in the HPC-Physics project)

Warning

Please note that members of the PhysicsOfData-students project have priority on the 4 T4 GPUs each coupled with 15 CPU cores. Users of the HPC-Physics project can use these GPUs, but they must release them within 2 days if requested by users with higher priority.

8.1. Reserving a GPU

Before creating a VM with a GPU, you need to reserve it. This can be done using a reservation system integrated in the dashboard.

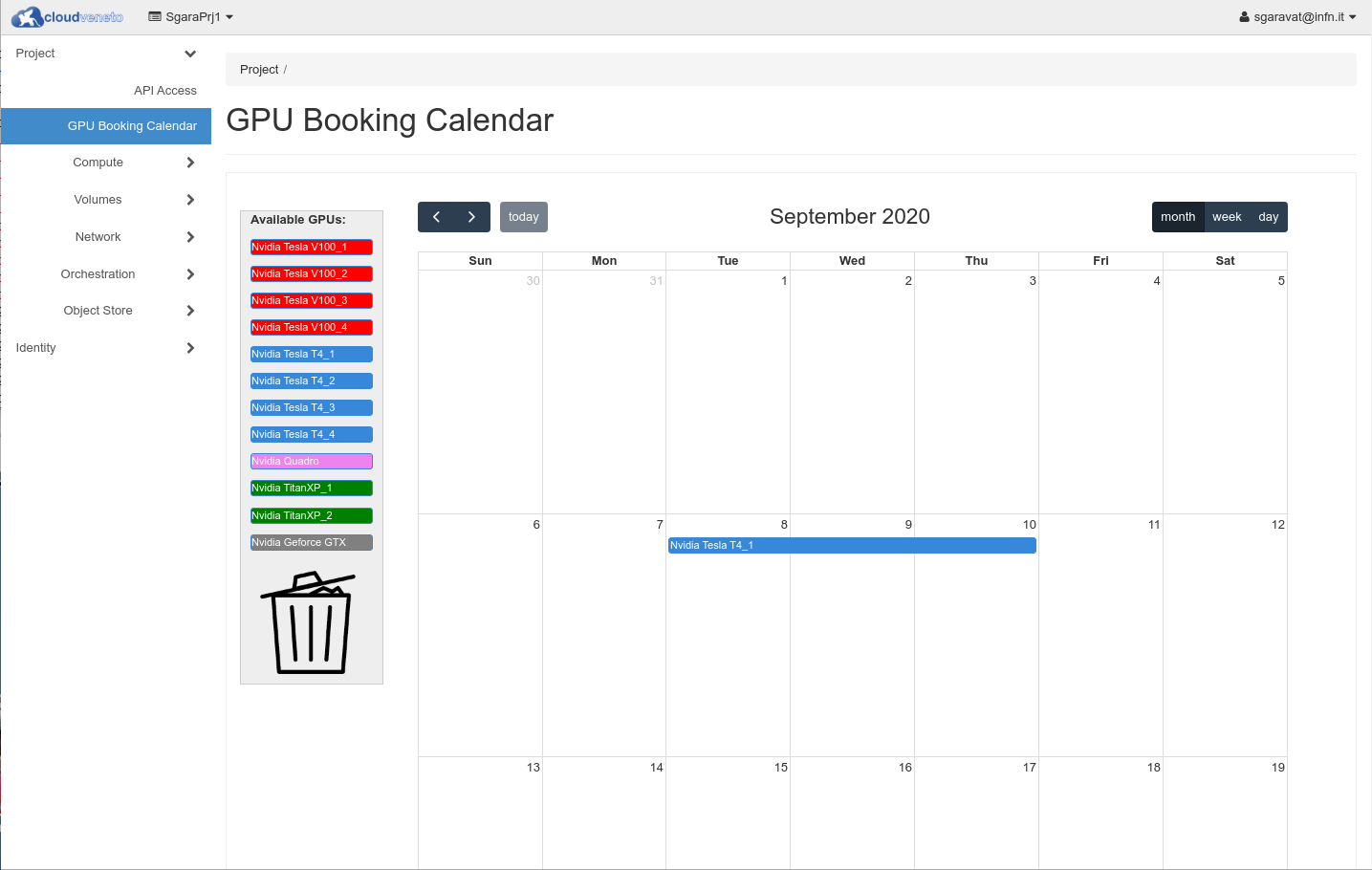

Using the Dashboard, click on GPU Booking Calendar.

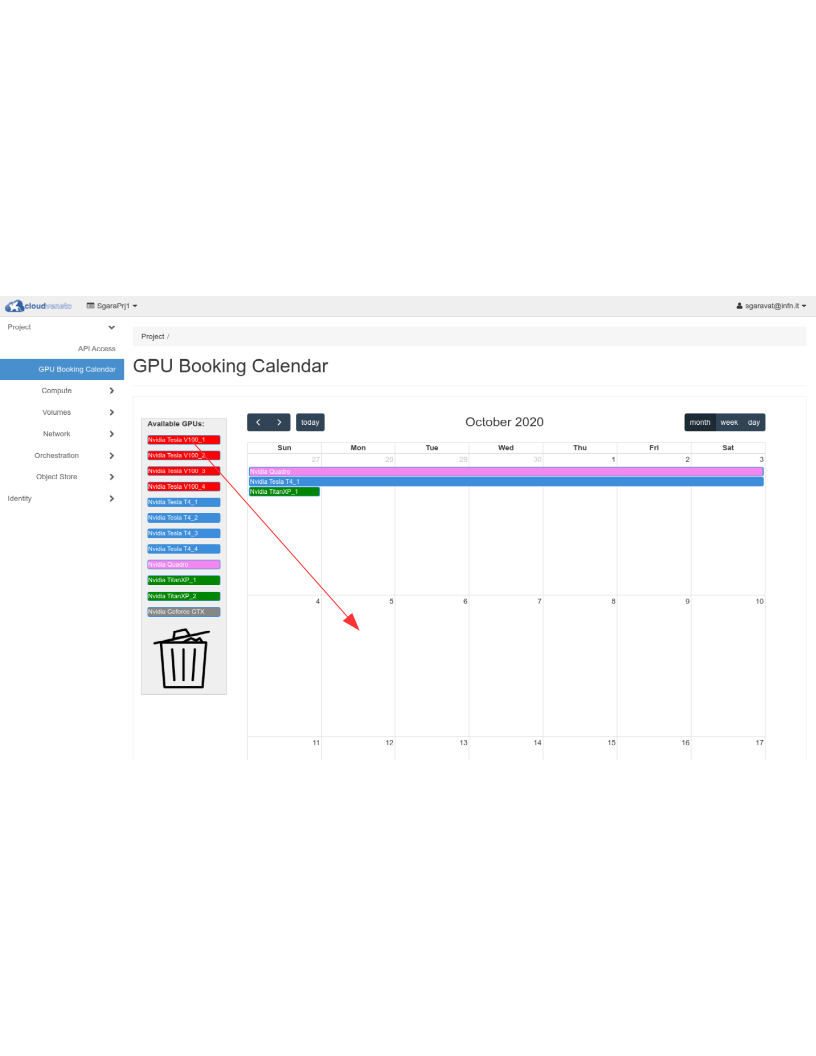

Let’s suppose that you want to reserve a Tesla V100 GPU from Oct 5 to Oct 9.

Move the desired GPU to the first day of the reservation (October 5, in our example).

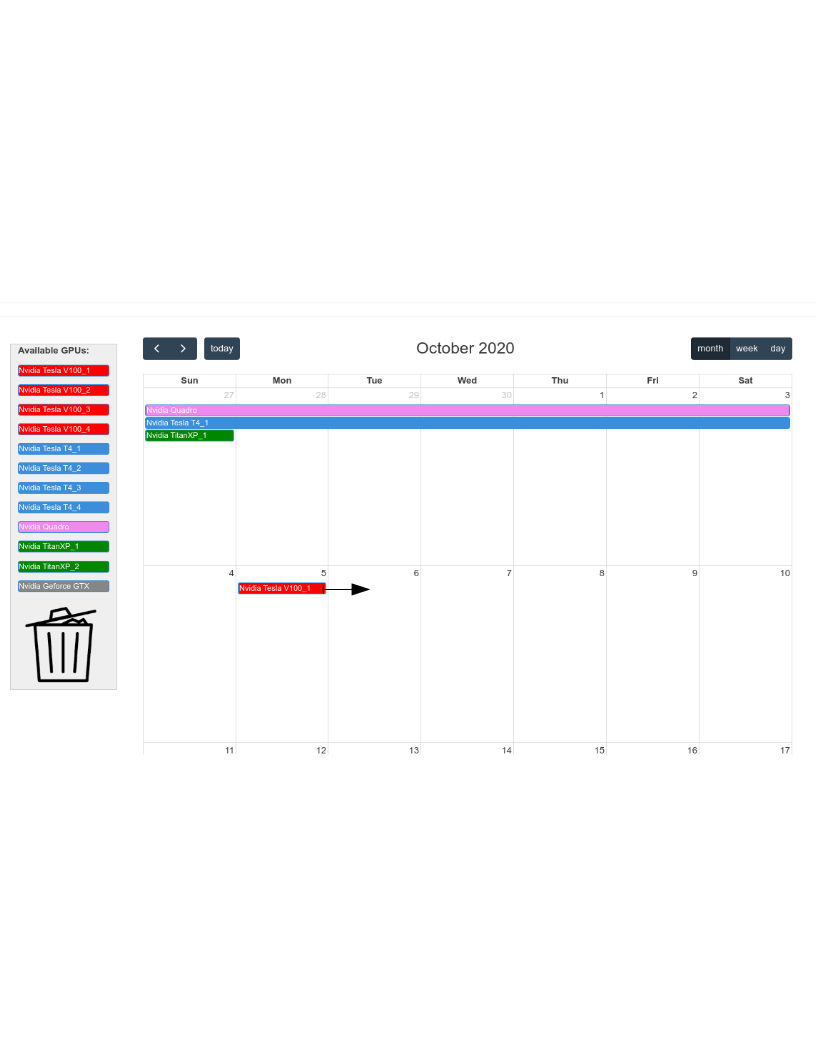

Using the mouse, you can then “enlarge” your reservation up to the desired last day (October 9, in our example).

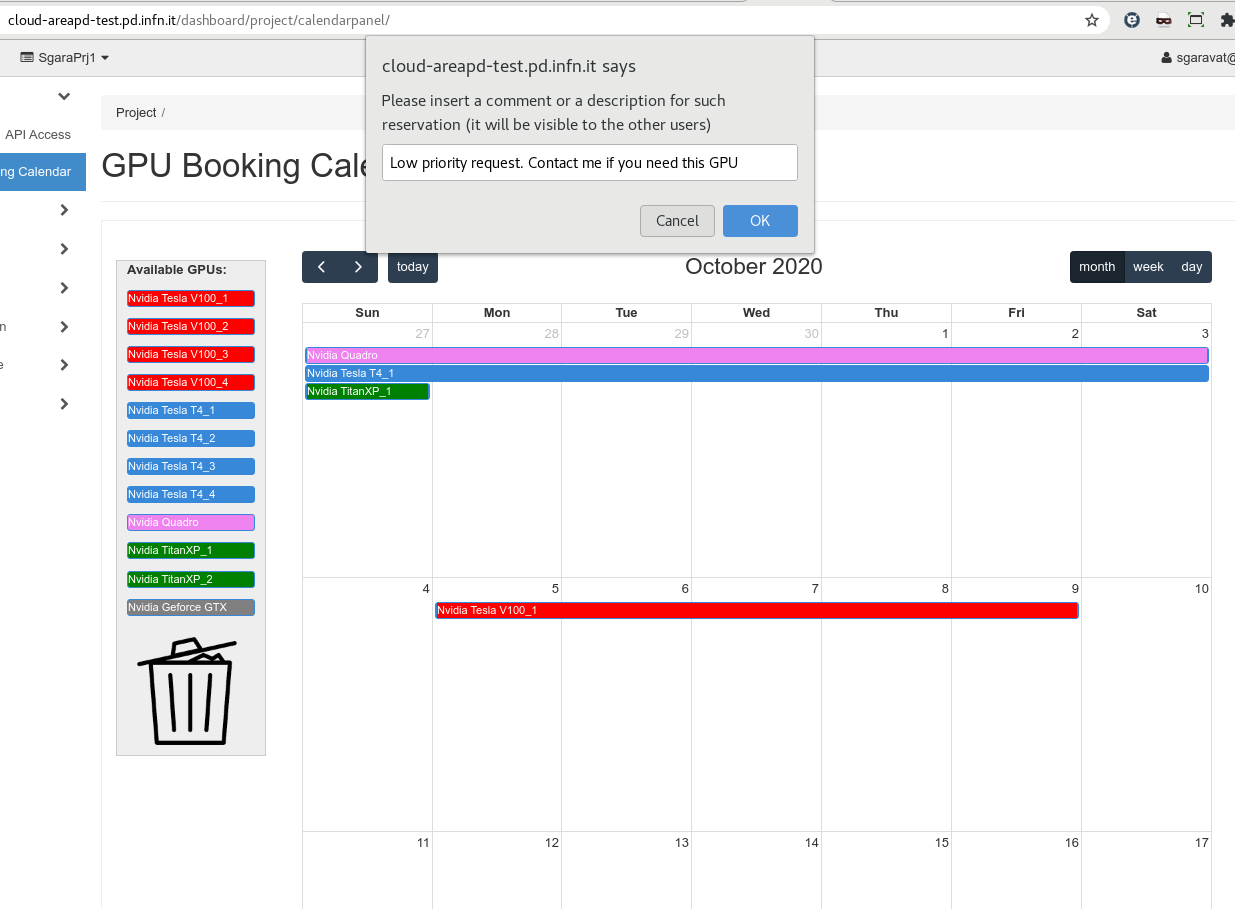

You may also attach a comment to the reservation by clicking on it. The comment is visible to other users.

Once you have reserved a GPU, you can proceed to create the relevant VM, as explained in the following section.

Warning

Please note that a reservation can be at most 15 days long and you may have at most 2 active reservations for a specific GPU.

Note

There must be a match between the username reported in the reservation and the username of the relevant virtual machine. Therefore, the reservation must be made by the same user who will then create the virtual machine.
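The reservation rules above (at most 15 days per reservation, at most 2 active reservations per GPU, and matching usernames) can be expressed as a few simple checks. The following Python sketch is only an illustration and is not part of the dashboard: the `Reservation` class and the function names are hypothetical, and it assumes that both the first and the last day count toward the 15-day limit and that a reservation stays active until its last day has passed.

```python
from dataclasses import dataclass
from datetime import date

# Limits stated in this section.
MAX_DAYS = 15            # maximum length of a single reservation
MAX_ACTIVE_PER_GPU = 2   # maximum active reservations per user for one GPU


@dataclass(frozen=True)
class Reservation:
    user: str    # username that made the reservation
    gpu: str     # hypothetical GPU label, e.g. "V100-1"
    start: date  # first reserved day
    end: date    # last reserved day (inclusive)

    def length_days(self) -> int:
        # Assumption: both the first and the last day are counted.
        return (self.end - self.start).days + 1

    def is_active(self, today: date) -> bool:
        # Assumption: a reservation is active until its last day has passed.
        return self.end >= today


def check_new_reservation(new, existing, today):
    """Return a list of rule violations; an empty list means no violation."""
    problems = []
    if new.end < new.start:
        problems.append("end date precedes start date")
    elif new.length_days() > MAX_DAYS:
        problems.append(f"reservation is {new.length_days()} days long (max {MAX_DAYS})")
    active = [r for r in existing
              if r.user == new.user and r.gpu == new.gpu and r.is_active(today)]
    if len(active) >= MAX_ACTIVE_PER_GPU:
        problems.append(f"{new.user} already has {len(active)} active reservations for {new.gpu}")
    return problems


def vm_matches_reservation(vm_owner: str, reservation: Reservation) -> bool:
    # The VM must be created by the same user who made the reservation.
    return vm_owner == reservation.user
```

Under these assumptions, the Oct 5 to Oct 9 reservation of the example is 5 days long and passes the checks, while a reservation spanning Oct 1 to Oct 16 (16 days) would be rejected.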

To delete a reservation, simply move it to the trash bin.

Note

The reservation system just described is visible only to the projects that have access to the GPUs.

8.2. Creating a GPU instance

The instructions for creating a GPU instance are the same as those for a ‘standard’ virtual machine (see Creating Virtual Machines). You only need to make sure that you use one of these special flavors:

cloudveneto.46cores470GB25+1000GB1RTX6000

Flavor for an instance with 1 GPU Nvidia RTX PRO 6000 Blackwell Server Edition, 46 VCPUs, 470 GB of RAM, 25 GB of ephemeral root disk space, 1000 GB of extra ephemeral disk space.

cloudveneto.18cores50GB25GB1V100

Flavor for an instance with 1 GPU Nvidia V100, 18 VCPUs, 50 GB of RAM, 25 GB of ephemeral root disk space.

cloudveneto.36cores100GB25GB2V100

Flavor for an instance with 2 GPUs Nvidia V100, 36 VCPUs, 100 GB of RAM, 25 GB of ephemeral root disk space.

cloudveneto.15cores90GB25G+500GB1T4

Flavor for an instance with 1 GPU Nvidia T4, 15 VCPUs, 90 GB of RAM, 25 GB of ephemeral root disk space, 500 GB of extra ephemeral disk space.

cloudveneto.8cores90GB25+2000GB1T4

Flavor for an instance with 1 GPU Nvidia T4, 8 VCPUs, 90 GB of RAM, 25 GB of ephemeral root disk space, 2000 GB of extra ephemeral disk space.

cloudveneto.30cores180GB25G+600GB2T4

Flavor for an instance with 2 GPUs Nvidia T4, 30 VCPUs, 180 GB of RAM, 25 GB of ephemeral root disk space, 600 GB of extra ephemeral disk space.

cloudveneto.16cores180GB25+4000GB2T4

Flavor for an instance with 2 GPUs Nvidia T4, 16 VCPUs, 180 GB of RAM, 25 GB of ephemeral root disk space, 4000 GB of extra ephemeral disk space.

cloudveneto.32cores360GB25+8000GB4T4

Flavor for an instance with 4 GPUs Nvidia T4, 32 VCPUs, 360 GB of RAM, 25 GB of ephemeral root disk space, 8000 GB of extra ephemeral disk space.

cloudveneto.8cores40GB25+500GB1Quadro

Flavor for an instance with 1 GPU Nvidia Quadro RTX 6000, 8 VCPUs, 40 GB of RAM, 25 GB of ephemeral root disk space, 500 GB of extra ephemeral disk space.

cloudveneto.8cores40GB25+400GB1TitanXP

Flavor for an instance with 1 GPU Nvidia Titan Xp, 8 VCPUs, 40 GB of RAM, 25 GB of ephemeral root disk space, 400 GB of extra ephemeral disk space.

cloudveneto.16cores80GB25+800GB2TitanXP

Flavor for an instance with 2 GPUs Nvidia Titan Xp, 16 VCPUs, 80 GB of RAM, 25 GB of ephemeral root disk space, 800 GB of extra ephemeral disk space.

cloudveneto.24cores120GB25+1200GB1Quadro2TitanXP

Flavor for an instance with 2 GPUs Nvidia Titan Xp, 1 GPU Nvidia Quadro RTX 6000, 24 VCPUs, 120 GB of RAM, 25 GB of ephemeral root disk space, 1200 GB of extra ephemeral disk space.

cloudveneto.4cores20GB25+200GB1GeforceGtx

Flavor for an instance with 1 GPU Nvidia GeForce GTX TITAN, 4 VCPUs, 20 GB of RAM, 25 GB of ephemeral root disk space, 200 GB of extra ephemeral disk space.
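The flavor names appear to encode the resources given in each description: vCPUs, RAM, root disk, extra ephemeral disk, and the number and model of GPUs. The following Python sketch decodes them. The naming pattern is inferred from the list above rather than from an official specification, so treat the regular expressions as an assumption that may not cover future flavors (for example, a model name containing a digit followed by a letter would be split incorrectly).

```python
import re
from dataclasses import dataclass

# Pattern inferred from the flavor names in this section (an assumption):
# cloudveneto.<vcpus>cores<ram>GB<root>[G|GB][+<extra>GB]<count><model>...
FLAVOR_RE = re.compile(
    r"^cloudveneto\.(?P<cores>\d+)cores(?P<ram>\d+)GB(?P<root>\d+)(?:GB|G)?"
    r"(?:\+(?P<extra>\d+)GB)?(?P<gpus>(?:\d+[A-Za-z][A-Za-z0-9]*)+)$"
)
# Splits e.g. "1Quadro2TitanXP" into [("1", "Quadro"), ("2", "TitanXP")]:
# a model name ends where the next "<digits><letter>" group starts, or at the end.
GPU_RE = re.compile(r"(\d+)([A-Za-z][A-Za-z0-9]*?)(?=\d+[A-Za-z]|$)")


@dataclass(frozen=True)
class Flavor:
    vcpus: int
    ram_gb: int
    root_gb: int
    extra_gb: int  # 0 when there is no supplementary ephemeral disk
    gpus: tuple    # ((count, model), ...)

    @property
    def total_gpus(self) -> int:
        return sum(count for count, _ in self.gpus)


def parse_flavor(name: str) -> Flavor:
    m = FLAVOR_RE.match(name)
    if m is None:
        raise ValueError(f"unrecognized flavor name: {name!r}")
    gpus = tuple((int(n), model) for n, model in GPU_RE.findall(m["gpus"]))
    return Flavor(
        vcpus=int(m["cores"]),
        ram_gb=int(m["ram"]),
        root_gb=int(m["root"]),
        extra_gb=int(m["extra"] or 0),
        gpus=gpus,
    )


if __name__ == "__main__":
    f = parse_flavor("cloudveneto.24cores120GB25+1200GB1Quadro2TitanXP")
    print(f.vcpus, f.ram_gb, f.extra_gb, f.gpus, f.total_gpus)
```

A helper like this can be handy, for instance, to filter a list of flavor names by GPU model or by the amount of extra ephemeral disk.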

Warning

When you snapshot or shelve an instance created using one of these flavors, please consider that only the root disk is saved. The content of the extra ephemeral disk is not saved!

Note

While some flavors allow the use of multiple GPUs, please note that the existing hardware is not optimized for GPU-to-GPU communication.

8.3. Storage for GPU instances

Flavors for GPU instances have a 20-25 GB ephemeral root disk.

Most GPU flavors also provide a larger supplementary ephemeral disk (see here for more information) that can be used when more fast disk space is needed. This ephemeral storage is usually implemented on fast (SSD/NVMe) disks, which are typically faster than persistent volumes.

However, this ephemeral storage does not provide a high level of reliability, so please make sure that your important data are stored on persistent volumes (see Volumes).

Warning

Only the content of the ‘root disk’ is saved when you do a snapshot or a shelve of a VM. So if the instance was created using a flavor that has a supplementary ephemeral disk, the content of such disk is NOT saved and will be lost.
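Since the supplementary ephemeral disk is not highly reliable and is not preserved by snapshots or shelving, results written there should be copied to a persistent volume. The following Python sketch does this using only the standard library; the function name `save_to_volume` is hypothetical, and the directories you pass to it depend on where the ephemeral disk and the volume are mounted in your VM.

```python
import shutil
from pathlib import Path


def save_to_volume(src, dst):
    """Copy every regular file under src to dst, keeping relative paths.

    src: a directory on the ephemeral disk
    dst: a directory on a mounted persistent volume
    Returns the list of destination files that were written.
    """
    src, dst = Path(src), Path(dst)
    copied = []
    for path in sorted(src.rglob("*")):
        if path.is_file():
            target = dst / path.relative_to(src)
            target.parent.mkdir(parents=True, exist_ok=True)
            shutil.copy2(path, target)  # copy2 also preserves timestamps
            copied.append(target)
    return copied
```

For example, you might call `save_to_volume("/mnt/ephemeral/results", "/mnt/volume/results")` (both paths hypothetical) before deleting, snapshotting or shelving the VM.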

8.4. Images for GPU instances

You are responsible for creating a “GPU ready” image (see User Provided Images and Building Images), or you can use a “standard” image and then install the needed GPU drivers and software.

These instructions explain how to install the CUDA toolkit and the NVIDIA drivers.
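After installing the drivers, a quick way to confirm that the GPU(s) of your flavor are visible inside the VM is `nvidia-smi -L`, which prints one line per GPU. The Python sketch below wraps that command; the parsing assumes the usual `GPU <index>: <name> (UUID: <uuid>)` line format, which may differ between driver versions, and the function names are illustrative only.

```python
import re
import shutil
import subprocess

# Matches lines such as "GPU 0: Tesla T4 (UUID: GPU-...)"
GPU_LINE = re.compile(r"^GPU (\d+): (.+?) \(UUID: ([^)]+)\)$")


def parse_gpu_list(text):
    """Return [(index, name, uuid), ...] from the output of 'nvidia-smi -L'."""
    gpus = []
    for line in text.splitlines():
        m = GPU_LINE.match(line.strip())
        if m:
            gpus.append((int(m.group(1)), m.group(2), m.group(3)))
    return gpus


def list_gpus():
    """List visible GPUs; returns [] if nvidia-smi is not on the PATH."""
    if shutil.which("nvidia-smi") is None:
        return []  # driver utilities not installed (or not on PATH)
    # check=True raises CalledProcessError if nvidia-smi cannot talk to the driver
    out = subprocess.run(["nvidia-smi", "-L"], capture_output=True, text=True, check=True)
    return parse_gpu_list(out.stdout)
```

On a correctly configured VM, `len(list_gpus())` should match the number of GPUs in the chosen flavor.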

8.5. What to do when your reservation expires

When your reservation for a GPU expires, you need to delete the virtual machine using it, so that the GPU can be used by other users.

If you need to use that VM again in the near future, a possible approach is to create the VM from a volume (see Creating Virtual Machines from Volumes). When you need to release the GPU, you can delete the VM while its disk is preserved (assuming that you specified ‘No’ for Delete Volume on Instance Delete at VM creation time). When you need a GPU again, after having successfully reserved it, you can create another VM from this volume.

Warning

Since there are known issues with shelving virtual machines that have GPUs, we do NOT recommend using this functionality to release GPUs.

8.6. Monitoring

Unfortunately, the CloudVeneto OpenStack dashboard does not make it straightforward to see which GPUs are in use and which ones are available.

You can refer to this page for such information (please note that this page is updated every 30 minutes).

8.7. Policies

Please consider the following policies when using GPU instances:

GPU-enabled flavors must be used only when a GPU is needed.

Since demand for GPUs is high, please delete your instance as soon as you no longer need it. Virtual machines, even if idle or shut down, keep their resources (GPUs in particular) allocated, so these are not available to other users.

Once activated, your virtual instance is managed by you.

Before creating an instance using one or more GPUs, please reserve them as explained here.

Instances for which there isn’t a reservation can be deleted by the Cloud administrators.

The projects HPC-Physics and CONVECS must be used only to instantiate virtual machines with GPUs.
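To help follow the policy of deleting instances you no longer need, you could periodically check whether the GPUs in your VM are actually being used. The Python sketch below samples GPU utilization with `nvidia-smi --query-gpu=utilization.gpu --format=csv,noheader,nounits`; the function names, the 5% threshold and the sampling schedule are arbitrary assumptions. It only tells you that the GPUs look idle: the VM keeps holding them until you delete it, even when idle or shut down.

```python
import subprocess
import time

QUERY = ["nvidia-smi", "--query-gpu=utilization.gpu", "--format=csv,noheader,nounits"]


def parse_utilization(text):
    """Parse one integer utilization percentage per GPU, one GPU per line."""
    return [int(line) for line in text.splitlines() if line.strip()]


def sample_utilization():
    """Take one utilization sample for all GPUs visible in the VM."""
    out = subprocess.run(QUERY, capture_output=True, text=True, check=True)
    return parse_utilization(out.stdout)


def looks_idle(samples, threshold=5):
    """True if every GPU stayed below `threshold` percent in every sample."""
    return bool(samples) and all(u < threshold for s in samples for u in s)


def watch(n_samples=12, interval_s=300, threshold=5):
    """Sample n_samples times, interval_s seconds apart (defaults: about 1 hour)."""
    samples = []
    for i in range(n_samples):
        samples.append(sample_utilization())
        if i < n_samples - 1:
            time.sleep(interval_s)
    return looks_idle(samples, threshold)
```

If `watch()` returns True, consider saving your data and deleting the instance so that the GPU can be used by others.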